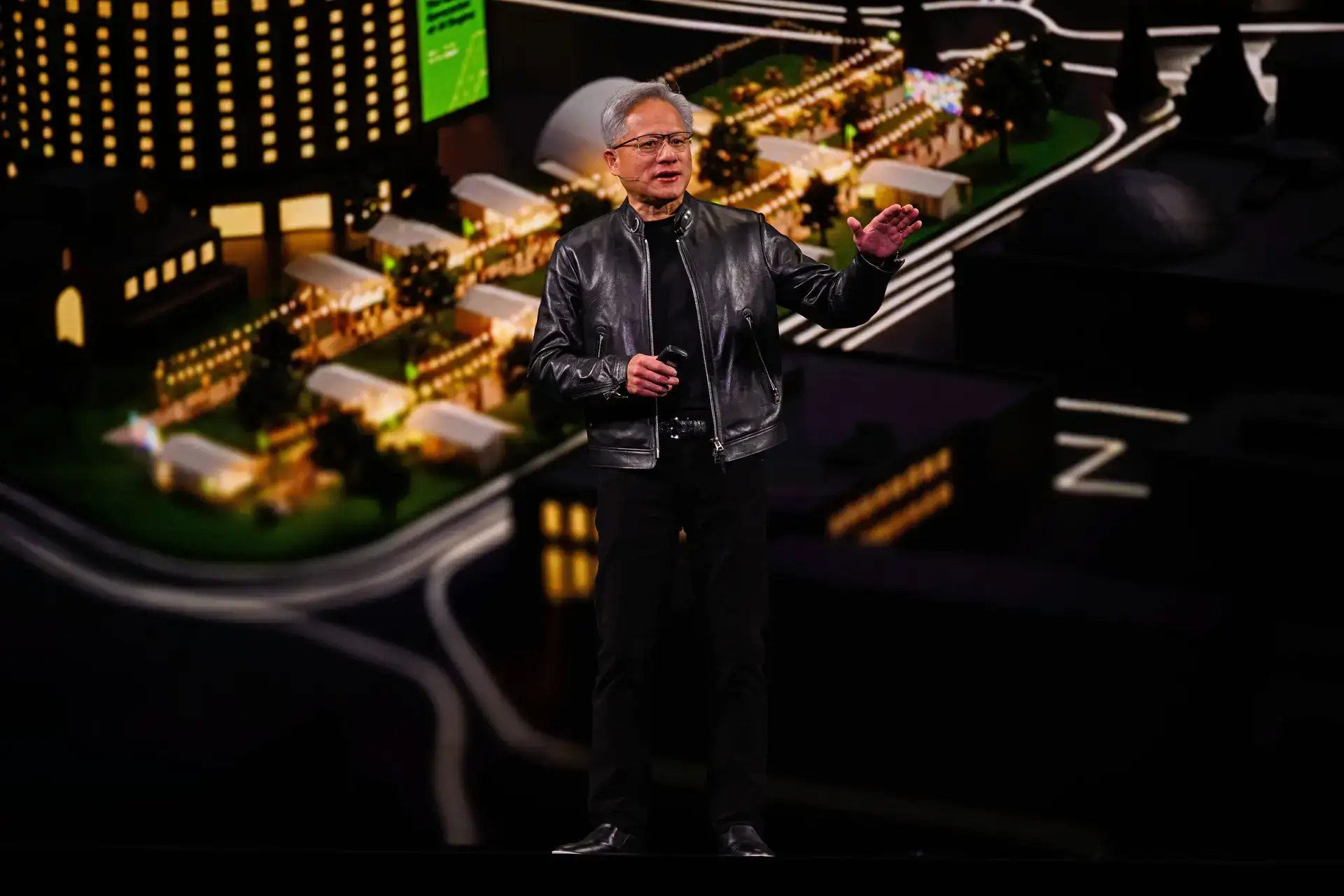

Nvidia CEO Jensen Huang has started his keynote at the chipmaker's annual developer conference in San Jose, California, where he is set to detail its hardware and software plans.

Shares of Nvidia - the world's most valuable listed company with a market capitalization of more than $4.3 trillion - were up 2.4% on Monday as investors awaited the announcements.

At a hockey arena with a capacity of more than 18,000, Huang is expected to lay out how the top AI chipmaker plans to adapt to a rapidly changing AI landscape at the four-day conference.

He started the keynote by making the argument that part of Nvidia's competitive advantage was its CUDA chip programming software, which some analysts regard as its strongest shield.

"The installed base is what attracts developers who then create (the) new algorithms that achieve the breakthrough" technologies, Huang said. "We are in every cloud. We're in every computer company. We serve just about every single industry."

The keynote is also likely to include details on a next-generation AI chip called Feynman, named after the late American physicist Richard Feynman.

Huang is also likely to talk about data centers, Nvidia's chip programming software CUDA, digital assistants known as AI agents and physical AI such as robots.

This year's event is even more crucial as investors will seek assurance that Nvidia's strategy of plowing back its profits into the AI ecosystem is paying off.

Another focus is likely to be Groq, a chip startup from which Nvidia licensed technology for $17 billion in December. Groq specializes in fast and cheap "inference" computing work, in which an AI model takes what it has already learned and uses it to answer a question or make a prediction in real time.

After spending hundreds of billions of dollars in recent years on chips for training their AI models, companies such as OpenAI, Anthropic and Facebook owner Meta Platforms are shifting toward serving hundreds of millions of users who are tapping those AI systems.

Nvidia faces greater competition in the market for chips for inference-computing work than it does for AI-training chips, and analysts expect the company to shore up its defenses against rivals looking to regain market share they lost to Nvidia in recent years.

Analysts also expect Nvidia to elaborate on why it invested $2 billion each in Lumentum and Coherent, both of which make lasers for sending information between chips in the form of beams of light.

Despite that increased competition, some of which is coming from Nvidia's own customers designing their own chips, Nvidia remains central to the global AI ecosystem.

Nations such as Saudi Arabia are building custom AI systems for their own populations using its chips, and Nvidia is one of the few large U.S. companies that continue to release open-source AI software, a growing field of competition between the U.S. and China.
